9.8.2019

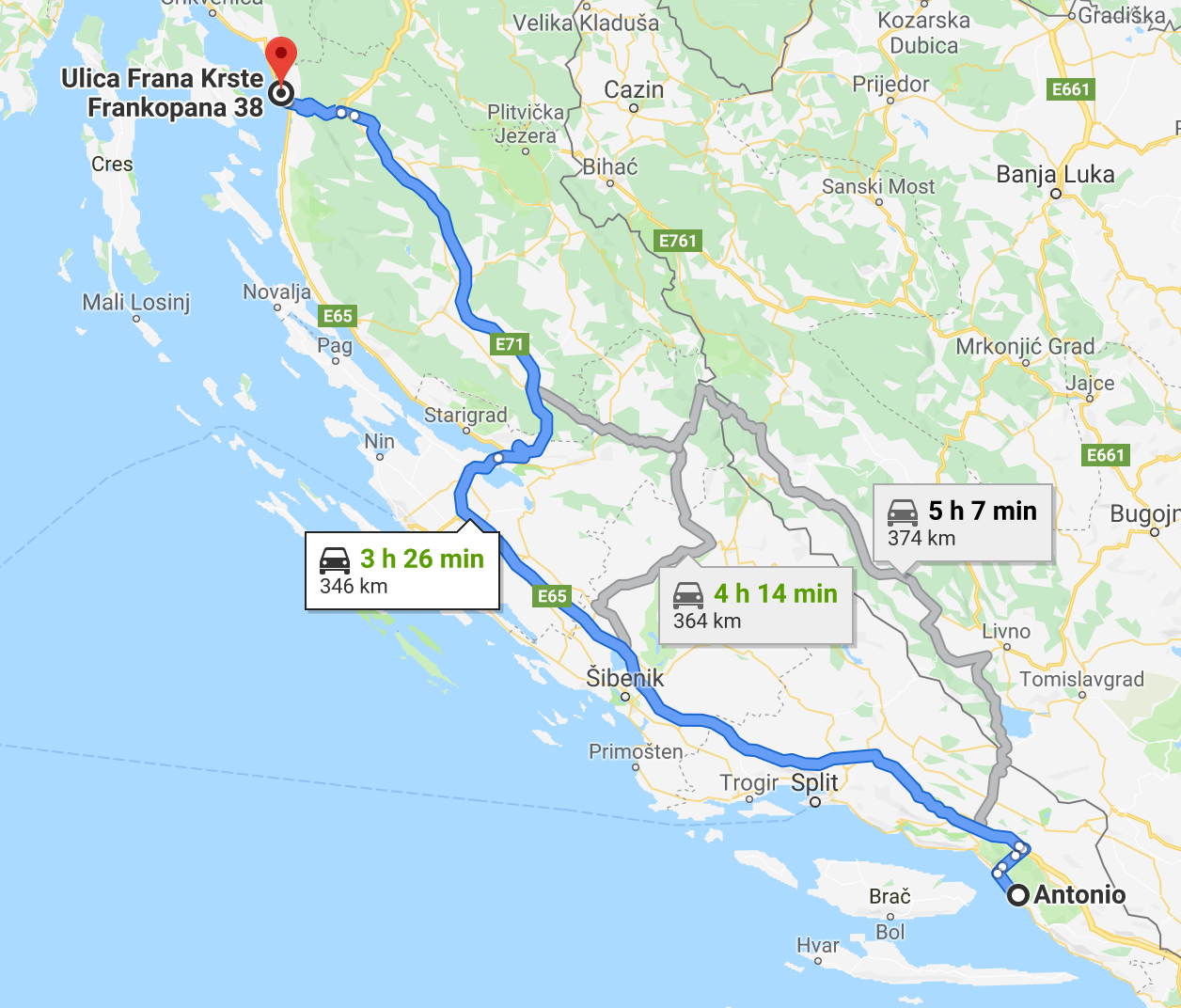

Here's our route for today (the 2h 17min is a LIE!!!):

First off, we should say right away: today we spontaneously decided not to stay in Dubrovnik after all, but to drive straight on to Montenegro.

Note the word "border" in the title, because that's where we actually spent most of today. It was also important to us to fill up the tank in Croatia, since we didn't know what currency Montenegro uses or whether you can pay by credit card there.

When a drive that should normally take 2 1/2 hours takes 5 1/2 hours... yeah... great.

Alischa then had the great idea of simply reading "A Song of Ice and Fire" aloud to Christian (pretty handy when you've brought the Kindle along and the book is on it :)). The time went by much faster after that... suddenly an hour was gone.

You could definitely tell the difference between Montenegro and Croatia, though... the houses look somewhat different, the roads are a bit worse, and people just drive any which way. When a single-lane roundabout turns into a three-lane roundabout, you know you have to watch out. We couldn't really make sense of the petrol stations either; no idea how much fuel costs there or what the exchange rate is.

We didn't want to take the ferry (why not, actually? Fear of the unknown?), so we drove all the way around the bay until we finally arrived in Tivat. Oh, and on the way there was just an airport! Planes were taking off right next to us!

All in all, we have to say that Montenegro is really beautiful too, especially this bay. Unfortunately we just drove past it :/. Tivat takes a bit of getting used to.

At the hotel we had already noticed that the minibar prices were listed in euros, and we thought that was pretty nice. We do have euros, but we hadn't bothered to look into Montenegro's currency.

We also went out to eat, and the prices there were in euros again... crazy. Apparently it's so touristy here that you can pay in euros too.

At some point Alischa googled it... For everyone who already knew, here's the confirmation: Montenegro uses the euro! Even though it's not in the EU! But apparently they're not allowed to mint their own coins... no idea how they got the euro in the first place.

Oh, and it's also quite interesting when you start converting euro prices into kuna to figure out how expensive or cheap your meals are compared to the last few days.

Wow, for what was really such an uneventful day, we still ended up with quite a lot of text!

If everything goes according to plan tomorrow (and we don't take twice as long as our sat nav says again), we'll drive to Lake Skadar. The pictures look really beautiful! We can't wait.